The natural next step for computing

Setting the scene

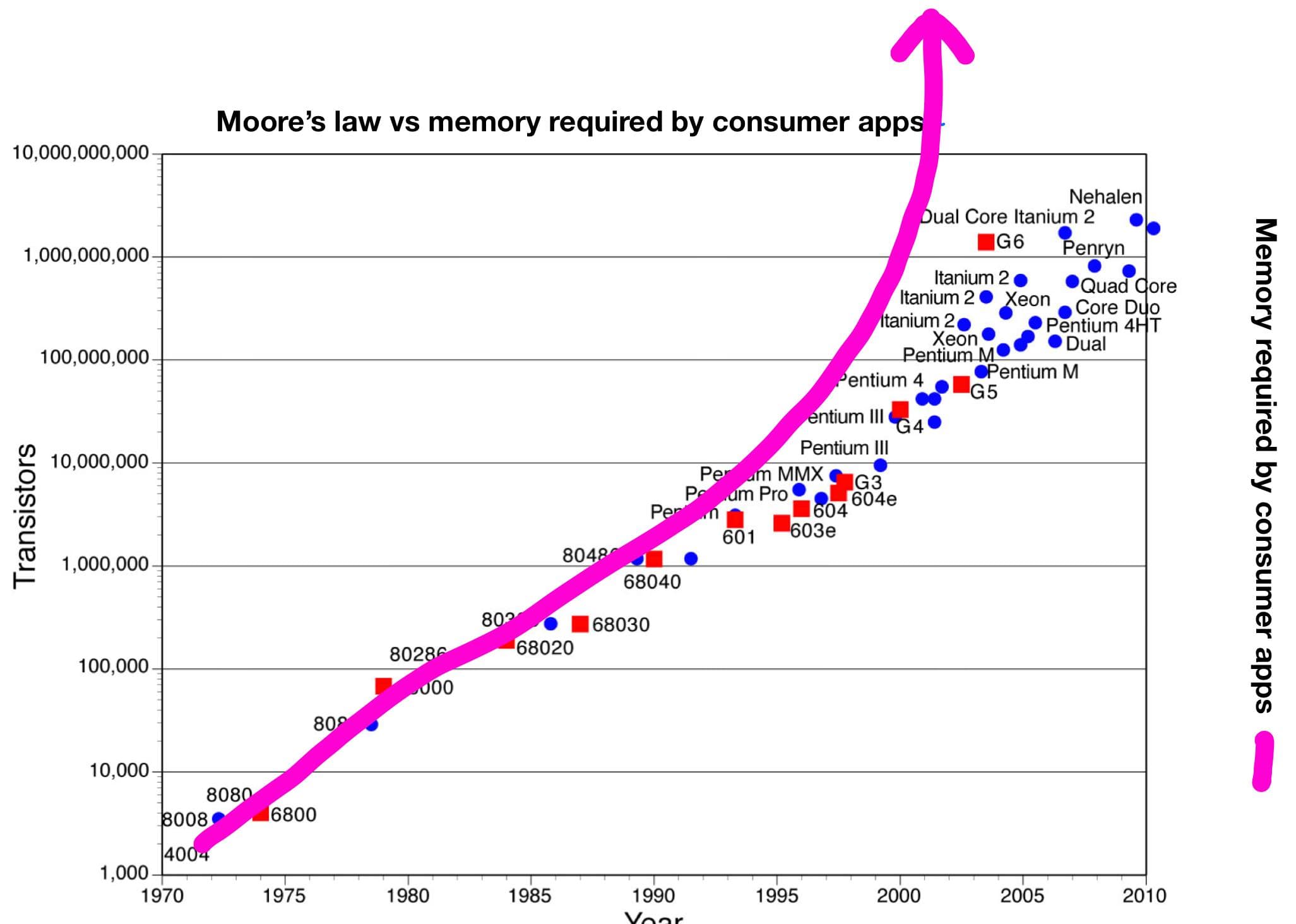

What if I told you the future of your laptop was 2GB of RAM and an i3 CPU? It's a little distressing to think about. Google Chrome would crash the whole thing as soon as you opened your second tab! I read a Tweet recently about the increasing memory and processor demands of modern apps. It almost seems as though the bloat in modern desktop applications is outpacing Moore's law. Often we have to "manually" manage our computers' memory by closing tabs, force quitting apps or paying for iCloud...

To be clear, I see limitations with both RAM (Random Access Memory) and disk space on modern computers. My phone has 6GB of RAM, but most computers only have 8GB? I am by no means an expert in these matters, so forgive me if I get some things wrong. However, I am someone who loves trends, and as far as I can see, the performance of physical computers (laptops and desktops) does not seem to be increasing as fast as we need.

Let's say you are running Slack, Google Chrome, Trello, Outlook and maybe some job-specific apps such as Excel and IntelliJ. These are the bare minimum. What if you also have a notepad and Spotify open? And don't even think about Docker. Your computer is bound to become sluggish, and certain apps may even crash. Worse yet, the fan will go on! Am I the only one the fan annoys? Or how about when I touch the Touch Bar on my MacBook and it feels like a stove? Not sure which one is worse, if I am being honest.

I don't think I am in the minority here. I have seen plenty of friends and family wait 1-2 seconds for a click to do something, or 30 seconds for MS Word to open. Yes, these may be extreme examples, but it does happen. If RAM isn't the issue, then disk space is running low. I have seen plenty of MacBook Airs (it always seems to be them) with a persistent "Your startup disk is almost full" notification in the top right corner of the screen (probably because Apple sells 128GB laptops to get everyone paying for iCloud).

You may have never encountered this problem because all you do is browse your favourite social media network. You never have to worry about the specs of your computer... until the day your computer is more than 3 years old and just starts slowing down. Why is it slowing down? Why now? Why does my battery only last 3 hours now (separate issue, I know, but still)?

Who wants that? Guess it is time to pony up and pay $2000 for a new computer. In essence, it becomes a lifetime subscription of about $2000 every 4 years, and every 4 years the memory requirements of your favourite apps increase. So you don't even get a much better experience! And that's on the lower end. If you want to get some computationally difficult stuff done, that $2000 becomes $4500-$6000 pretty quickly. For businesses the problem is particularly pronounced. Development team of 10? That's $20-40k every 4 years. And the worst part is, some people barely use their laptops or desktops, but they can't go without access to them.

Where do we look for solutions?

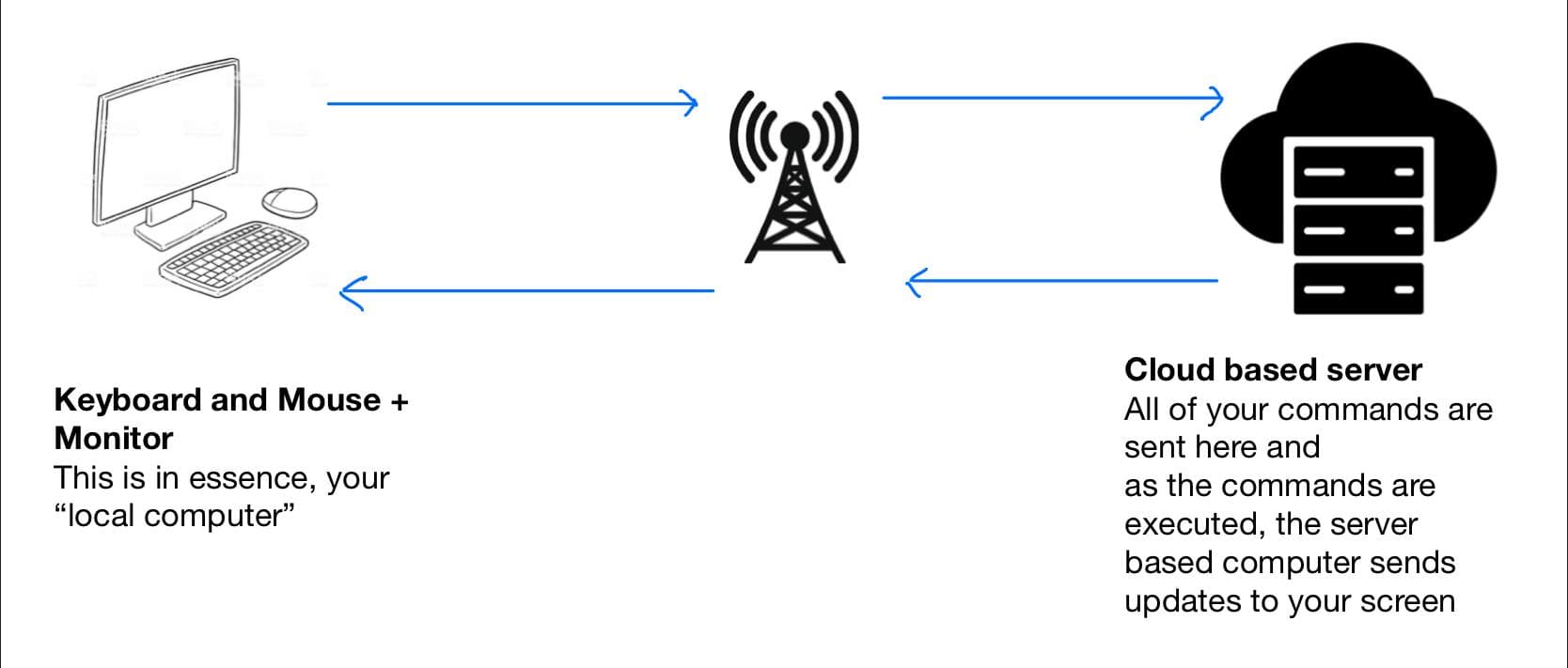

To me, the solution may come within our reach with the advent of 5G and 6G technologies. This latest generation of network infrastructure may mean that we no longer need our traditional notion of a computer living in one place. We can break it up. We create a device that looks and feels exactly like a laptop (let's call it the Laptop 2.0), but instead of packing excessive CPU, RAM and disk space into that device, its only purpose is to send your commands to a server running a virtualised instance of your computer. The Laptop 2.0 can still do basic things, but if you're not connected to the internet it will be limited.

Just like AWS does now, we keep the difficult parts of computers in cloud data centres and connect to them over the internet. You don't need to carry around a 32GB RAM MacBook with 1TB of storage. You may need those physical compute resources, but why own the hardware? How viable is something like this?

Let's consider what a prototypical Laptop 2.0 looks like. In my mind, the Laptop 2.0 has the following features:

- Heavily focused on user experience. Similar to the Macbooks of today, this Laptop 2.0 should be a delight to look at, touch and interact with.

- Little processing power. All we want it to do is run 1-2 apps at a time so that it's not completely useless without the internet.

- Highly efficient network I/O. We want this machine to relay and receive data as fast (latency) and as efficiently (bandwidth) as possible. This means wifi, networking chips and anything else that may affect this.

Essentially, the main purpose of this machine is to serve as a UX focused internet link. You type commands -> sent over the internet to your backup computer -> results of those commands are sent back to you -> your screen is updated. How fast this happens, exactly what happens on the Laptop 2.0 vs the server at a more granular level and the latency are all still up for discussion.

Large providers can sell a LaaS (Laptop 2.0 as a Service) product whereby you only rent a laptop for 2 years at a time. Therefore you no longer need to pay upfront costs, and you pay a subscription of $20 a month for the hardware and $20 a month for the software/cloud portion.

This aside, that laptop will then be used to link up to cloud based "backup computers". In my mind, these would be very similar to the cloud servers we have today. They can share CPU resources and memory (virtualisation), your disks are backed up regularly and you only pay for the time that you use them. You use your computer for maybe 8 hours a day? Pay for only those 8 hours!

On AWS, for example, you can rent an 8GB RAM Linux machine for $0.13 USD per hour, or a 16GB RAM machine for $0.26 per hour. The costs also generally check out. If you rent a 16GB machine and actually use it for 10 hours a day, 200 (working) days a year, that will cost about $520 a year. Over the course of 4 years you pay about $2,080, not the $3,500 a 16GB RAM machine would usually cost. On top of that, if the provider is smart, they can implement features that bring the 200 days x 10 hours down to 150 days x 5 hours. How much do you really use your computer?
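The arithmetic above can be sketched in a few lines of Python. The hourly rate and usage patterns are the figures quoted in this post; the function name `rental_cost` is just a hypothetical helper for illustration, and real cloud pricing will vary by provider and region.

```python
def rental_cost(hourly_rate, hours_per_day, days_per_year, years=1):
    """Total pay-as-you-go cost in USD for a rented cloud machine."""
    return hourly_rate * hours_per_day * days_per_year * years


RATE_16GB = 0.26  # USD per hour, the 16GB figure quoted in this post

# Heavy use: 10 hours a day, 200 working days a year.
heavy_one_year = rental_cost(RATE_16GB, 10, 200)
heavy_four_years = rental_cost(RATE_16GB, 10, 200, years=4)

# Lighter use if the provider only bills active time: 5 hours a day, 150 days.
light_four_years = rental_cost(RATE_16GB, 5, 150, years=4)

print(f"Heavy use, 1 year:  ${heavy_one_year:,.2f}")
print(f"Heavy use, 4 years: ${heavy_four_years:,.2f}")
print(f"Light use, 4 years: ${light_four_years:,.2f}")
```

With these inputs the heavy-use case works out to $520 a year, or $2,080 over four years, and the lighter pattern to $780 over four years.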

Execution

The problem becomes: how do you architect a distributed machine where one half is only accessible via the internet? If the networks we use pass a certain threshold of bandwidth and latency, it should be possible to pass information in the following way without it being overly noticeable:

- You purchase a plan for a Laptop 2.0. This laptop costs you $20 a month to rent, and the only purpose it serves is high speed internet connectivity and passing commands to the bigger computer running in the cloud

- These commands are passed through the internet and then executed by the machine/server in the cloud. This machine can be a physical computer or a Docker-like abstraction. How this is implemented will determine how cheaply the provider can offer it to you.

- The machine in the cloud is actually running the processes and threads that would usually run on your computer. It then sends back commands to your Laptop 2.0, which updates the screen.
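To make the round trip in the steps above a little more concrete, here is a toy sketch in Python. Everything in it is hypothetical: the class names `ThinClient` and `CloudMachine`, the JSON message shapes, and the fact that the "network" is just a direct function call. A real system would put an actual transport, compression and security between the two halves.

```python
import json


class CloudMachine:
    """Stands in for the remote machine that holds the real state."""

    def __init__(self):
        self.document = ""

    def handle(self, message: str) -> str:
        event = json.loads(message)
        if event["type"] == "key":
            self.document += event["value"]
        elif event["type"] == "backspace":
            self.document = self.document[:-1]
        # Reply with what the thin client should draw on screen.
        return json.dumps({"type": "screen", "text": self.document})


class ThinClient:
    """Stands in for the Laptop 2.0: forwards input, draws whatever comes back."""

    def __init__(self, send):
        self.send = send  # callable that delivers a message and returns the reply
        self.screen = ""

    def press(self, key: str) -> None:
        if key == "\b":
            event = {"type": "backspace"}
        else:
            event = {"type": "key", "value": key}
        reply = json.loads(self.send(json.dumps(event)))
        self.screen = reply["text"]


cloud = CloudMachine()
laptop = ThinClient(send=cloud.handle)  # direct call in place of a real network

for key in "helo\blo":  # type "helo", backspace once, then type "lo"
    laptop.press(key)

print(laptop.screen)
```

The point of the sketch is that the laptop never holds the document itself. It only sends input events and repaints with whatever the cloud machine returns, so every keystroke costs one network round trip.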

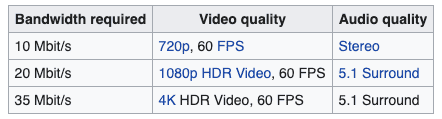

I suppose the question now becomes one of latency. How noticeable will this latency be, and what can we expect in the best case scenario? Google's Stadia has implemented a similar solution, whereby any type of device with an internet connection can connect to cloud-based servers where the game is actually running. Looking at the sort of connection they require, it does seem theoretically possible to achieve what I am proposing:

4K HDR with only 35Mbit/s? With 5G we can expect between 50Mbit/s and almost 1Gbit/s.

According to Wikipedia, we can expect an average latency of 8-12ms for the first few years of 5G, with latencies of 1-4ms reported as the minimum. I conducted a test on the NBN and saw an average of 10-20ms.
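A rough way to reason about these numbers is to add up a latency budget for a single keystroke. The sketch below uses the network figures quoted above and treats them as round-trip times. The server processing time, the encode/decode time and the 100ms "feels instant" budget are purely my assumptions for illustration, not measurements.

```python
# Assumed values, not measurements: tweak them to explore other scenarios.
SERVER_MS = 5.0     # assumed time for the cloud machine to process the input
CODEC_MS = 10.0     # assumed time to encode and decode the screen update
BUDGET_MS = 100.0   # assumed threshold below which a response feels instant


def input_to_screen_ms(network_rtt_ms, server_ms=SERVER_MS, codec_ms=CODEC_MS):
    """Estimated delay between pressing a key and seeing the result."""
    return network_rtt_ms + server_ms + codec_ms


def stream_fits(required_mbit, available_mbit):
    """True if the link has enough bandwidth for the stream."""
    return available_mbit >= required_mbit


# Network latencies (ms) quoted in this post, worst case of each range.
scenarios = {
    "5G, early average": 12.0,
    "5G, reported minimum": 4.0,
    "NBN test": 20.0,
}

totals = {name: input_to_screen_ms(rtt) for name, rtt in scenarios.items()}

for name, total in totals.items():
    verdict = "within" if total <= BUDGET_MS else "over"
    print(f"{name}: {total:.0f}ms ({verdict} the {BUDGET_MS:.0f}ms budget)")

# A 35Mbit/s stream on the low end of the quoted 5G range (50Mbit/s).
print("35Mbit/s stream fits on 50Mbit/s:", stream_fits(35, 50))
```

Under these assumptions the network itself is a small part of the budget, so how quickly the server can render and encode each update would matter at least as much as the connection.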

Obviously this isn't perfect yet, but it's an idea that, if executed well, could be a big one. Latency will probably be the main issue, but that can be overcome. For example, you only really need the process or app you are currently interacting with to be in memory on your computer, and you can swap apps in and out as you need. This is difficult, no doubt, but that's not really the point. Everything in software these days is quite difficult. And solving difficult problems is a very profitable and worthwhile endeavour.
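One simple way to picture the swapping idea is a least-recently-used (LRU) policy: keep the last couple of apps you touched on the laptop and push everything else back to the cloud. This is only a sketch of one possible policy, and the class name `LocalAppCache` and the capacity of 2 are assumptions for illustration.

```python
from collections import OrderedDict


class LocalAppCache:
    """Keeps only the most recently used apps resident on the laptop."""

    def __init__(self, capacity=2):
        self.capacity = capacity
        self.resident = OrderedDict()  # app name -> state, least recent first

    def focus(self, app):
        """Bring an app to the foreground; return the evicted app, if any."""
        evicted = None
        if app in self.resident:
            # Already local: just mark it as the most recently used.
            self.resident.move_to_end(app)
        else:
            if len(self.resident) >= self.capacity:
                # Push the least recently used app back to the cloud.
                evicted, _ = self.resident.popitem(last=False)
            self.resident[app] = "loaded"
        return evicted


cache = LocalAppCache(capacity=2)
evictions = [cache.focus(app) for app in ["Slack", "Chrome", "Slack", "IntelliJ"]]

print("Evicted at each step:", evictions)
print("Resident on the laptop:", list(cache.resident))
```

Here opening IntelliJ evicts Chrome rather than Slack, because Slack was used more recently.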

To me this general trend also makes sense. Your computer, and what is on it, becomes a more integral part of your life every year. Having that backed up and accessible at any time is worthwhile. We would also be able to write APIs that can interact with your computer in the cloud, serving as some sort of personal database. You could even run software like a virtual assistant on this computer. You could have a headset and talk to it, ask it to do things and so on. These are all just ideas, but to me the idea of a physical computer is becoming more and more obsolete every day.

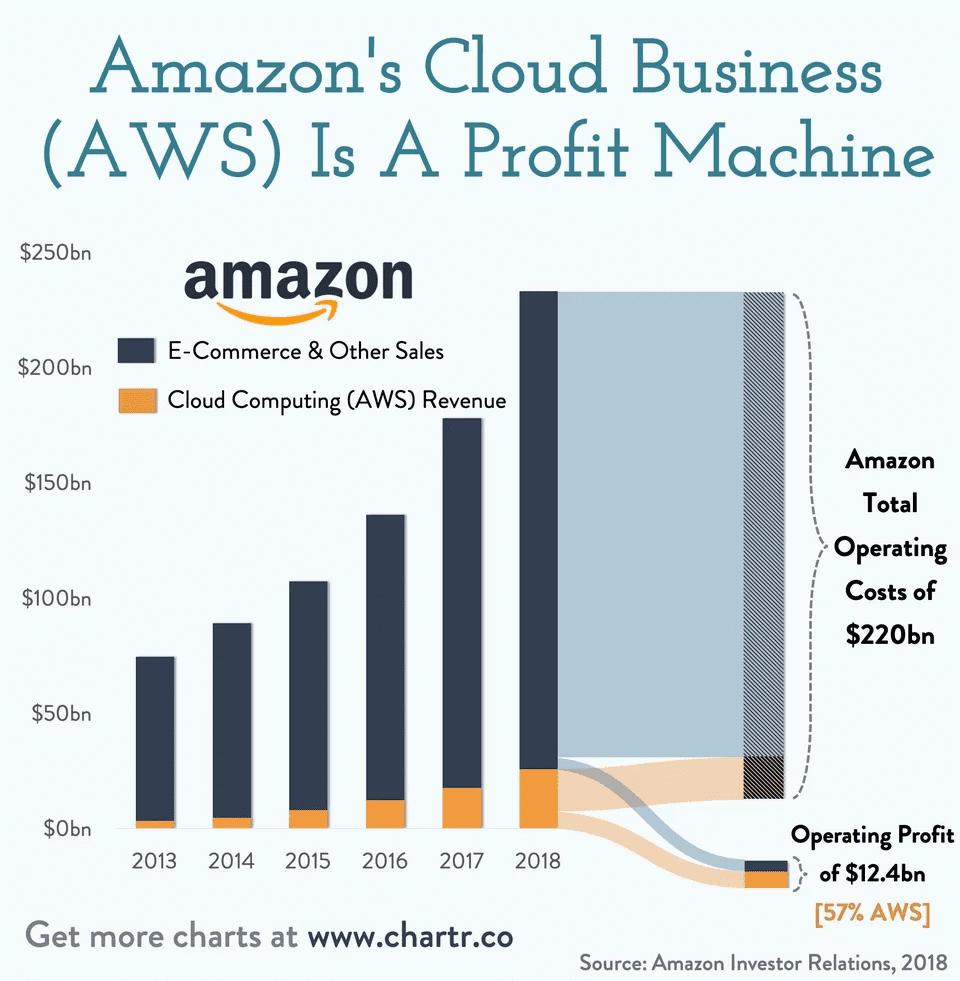

Cloud based software also offers significant economies of scale, just ask AWS:

Of course AWS is a completely different business and there's no point in comparing the two, but it shows how profitable cloud-based software (and hardware) becomes, even at the scale of Amazon.

In short, we can see the future potential of changing how we architect computers so that they involve the internet layer. It won't be easy, but it can provide significant upside for businesses and individuals. As we move to a future where more and more devices connect to the internet, the more accessible your computer becomes, the more effective you can be.